"The API of Me" in the age of AI

How do we control what we share with AI models? An API could help, if we get beyond the design challenges. Read: "Everything Starts Out Looking Like a Toy" #173

Hi, I’m Greg 👋! I write weekly product essays, including system “handshakes”, the expectations for workflow, and the jobs to be done for data. What is Data Operations? was the first post in the series.

This week’s toy: as you think about purchasing holiday gifts, consider toys that will last a lifetime. That doesn’t necessarily mean buying indestructible toys, but it does mean thinking about toys that promote creativity. Edition 173 of this newsletter is here - it’s November 27, 2023.

If you have a comment or are interested in sponsoring, hit reply.

The Big Idea

A short long-form essay about data things

⚙️ "The API of Me" in the age of AI

Our computing ability intersects with our own personal dataset to create new and differentiated solutions with AI at the center. But it’s hard to know whether interacting with these solutions is safe or dangerous.

How do we know what we’re sharing? These systems are in a cloud environment that we don’t control and are subject to a user agreement that most non-lawyers will have trouble comprehending.

About a decade ago I wrote an essay on the API of Me - a description of how we could take back control of our personal preferences while keeping space for commercial use.

What is “an API of Me”?

An API is an Application Programming Interface - a way for computers to speak to each other and share a “handshake” that allows them to broadcast how they will request and respond to information.

Understanding the information blueprint provided by an API lets you know how to communicate with another system.

There’s a lot going on in the background when we use these systems. I believe we need to design these interfaces so that the choices consumers make better match the outcomes they want.

As consumers, we don’t have an easy way of expressing our preferences across applications, devices, and situations. We use operating system preferences (Apple or Android), application preferences (depending on an individual application), and add-on preferences (ad blockers or VPN programs).

We need a solution that takes our preferences into account.

Why does this matter?

AI models will soon be present on local, private devices. Understanding the data contract we make with applications is even more important when we think about the data we’re sharing intentionally and inadvertently.

The original essay made points that resonate today:

Online identity is controlled by companies, not individuals

People have a right to know how their information is being used

Companies need to share how customer information is being used

Customers need a way to control the information they share with companies

We need to minimize the inappropriate use of this information

Authentication and authorization shouldn’t depend on a single source

A decade later, these problems are still not solved.

We have many of the parts we would need to create a solution that lets people understand and control the information they share with the larger world while maintaining the ability to build commercial products on top of this structure.

Towards an API of Me

If we wanted to build an API of Me today, what are some key building blocks to address?

Learning and usability - The biggest obstacle to an “API of me” is a design hurdle.

It’s confusing to know what information you are making available, for what period of time, to whom, and where it might be used.

A local AI, running only on your device, might be the breakthrough we need to be able to explain the choices you are making with your data so that you are more informed. (Of course, you’ll need to narrow the context and set rules so that it accomplishes what you need.)

Authentication - you need to prove that you are who you say you are. You’d need a multi-factor auth scheme that combines something you know (a password), something you generate (a device password, run locally), and some other factor (a passphrase, a physical key, or similar).

I’m on the fence about biometrics and hopeful that they can be secured on-device so that you could use a thumbprint or a facial scan if you want.
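As a rough illustration of the "something you generate" factor, here is a minimal sketch that assumes the locally generated device password is a time-based one-time code in the style of RFC 6238 (TOTP). That choice is an assumption for illustration, not something the original essay specified. The function names `totp` and `verify_login` are hypothetical, and a real scheme would also handle clock drift, rate limiting, and secure storage of the shared secret.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Generate a time-based one-time code locally from a shared secret."""
    now = time.time() if at is None else at
    counter = int(now // step)  # number of time steps since the Unix epoch
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low 4 bits pick the offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_login(password_ok: bool, submitted_code: str, secret: bytes,
                 at: Optional[float] = None) -> bool:
    """Hypothetical two-factor check: both the password and the device code must pass."""
    expected = totp(secret, at)
    # compare_digest avoids leaking information through comparison timing
    code_ok = hmac.compare_digest(submitted_code.encode(), expected.encode())
    return password_ok and code_ok
```

The point of the design is that the code is computed on the device from a secret that never leaves it, so the second factor does not depend on a single cloud-hosted source.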

Authorization - you need to know what you are allowed to do, based on who you are.

This one’s the trickiest of the building blocks, mostly because authorization is made up of policies that combine the following concepts:

Resources - the combination of objects and attributes you might share with the world (my shoe size, physical address, or ability to read my banking account balance are only a few examples)

Roles - these might be trusted individuals, one-time access, or automation handlers

Actions - does a policy allow read, write, or update, or does it grant no access at all?

Preferences - when you establish a preference (for example, preferred subscription length or ads I’d prefer not to see), how do you link that preference to resources or roles so that it can be reused?

Time - are requests and responses time-limited, rate-limited, or otherwise time-bound (e.g. only possible for a period of x days)?

Requests - what are the interfaces presented to the world for a request?

Responses - based on the role of the requestor and the policy, how are responses blocked or allowed, and what information do they contain?
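To make these concepts a little more concrete, here is a minimal sketch of how resources, roles, actions, time limits, requests, and responses might fit together. Every name in it (`Policy`, `Request`, `evaluate`, `respond`, the `"retailer"` role, and the sample data) is hypothetical and chosen only for illustration. It assumes a deny-by-default model and leaves out preferences and rate limits.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Dict, FrozenSet, List, Optional


@dataclass(frozen=True)
class Policy:
    resource: str                           # e.g. "shoe_size"
    role: str                               # e.g. "retailer" or "trusted_individual"
    actions: FrozenSet[str]                 # subset of {"read", "write", "update"}; empty means no access
    expires_at: Optional[datetime] = None   # None means the grant has no time limit


@dataclass(frozen=True)
class Request:
    resource: str
    role: str
    action: str
    at: datetime


def evaluate(policies: List[Policy], request: Request) -> bool:
    """Deny by default; allow only if an unexpired policy matches the request."""
    for policy in policies:
        if policy.resource != request.resource or policy.role != request.role:
            continue
        if policy.expires_at is not None and request.at >= policy.expires_at:
            continue
        if request.action in policy.actions:
            return True
    return False


def respond(policies: List[Policy], data: Dict[str, str], request: Request) -> dict:
    """Build a response that reveals a value only for an allowed read."""
    if not evaluate(policies, request):
        return {"allowed": False}
    response = {"allowed": True, "resource": request.resource}
    if request.action == "read":
        response["value"] = data.get(request.resource)
    return response


# Usage with made-up data: a retailer may read a shoe size for seven days.
now = datetime(2023, 11, 27, tzinfo=timezone.utc)
my_data = {"shoe_size": "10", "bank_balance": "1200"}
my_policies = [Policy("shoe_size", "retailer", frozenset({"read"}), now + timedelta(days=7))]
print(respond(my_policies, my_data, Request("shoe_size", "retailer", "read", now)))
print(respond(my_policies, my_data, Request("bank_balance", "retailer", "read", now)))
```

In this sketch anything not explicitly granted is refused, so forgetting to write a policy exposes nothing. A preference layer could sit on top by generating policies like these from reusable rules.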

There’s a lot of ground to cover, but much of this resembles the work we’ve already done to build access controls into local and enterprise software.

If the API of Me is easier to configure than the equivalent choices presented by Apple and Google, we’ll have a truly personal experience while protecting our information in the ways we expect.

What’s the takeaway? Locally run AI models – with the right context – provide a promising way to inform consumers about the information they share (or choose not to share) with third parties. The trick? Figuring out a way to be transparent when AI agents communicate with other AI agents.

Links for Reading and Sharing

These are links that caught my 👀

1/ Design ftw - I enjoyed this essay by Rei Inamoto on the importance of innovative design to drive products forward. When the underlying components of a solution are commodities, how do you create a differentiated result? One way is a breakthrough design.

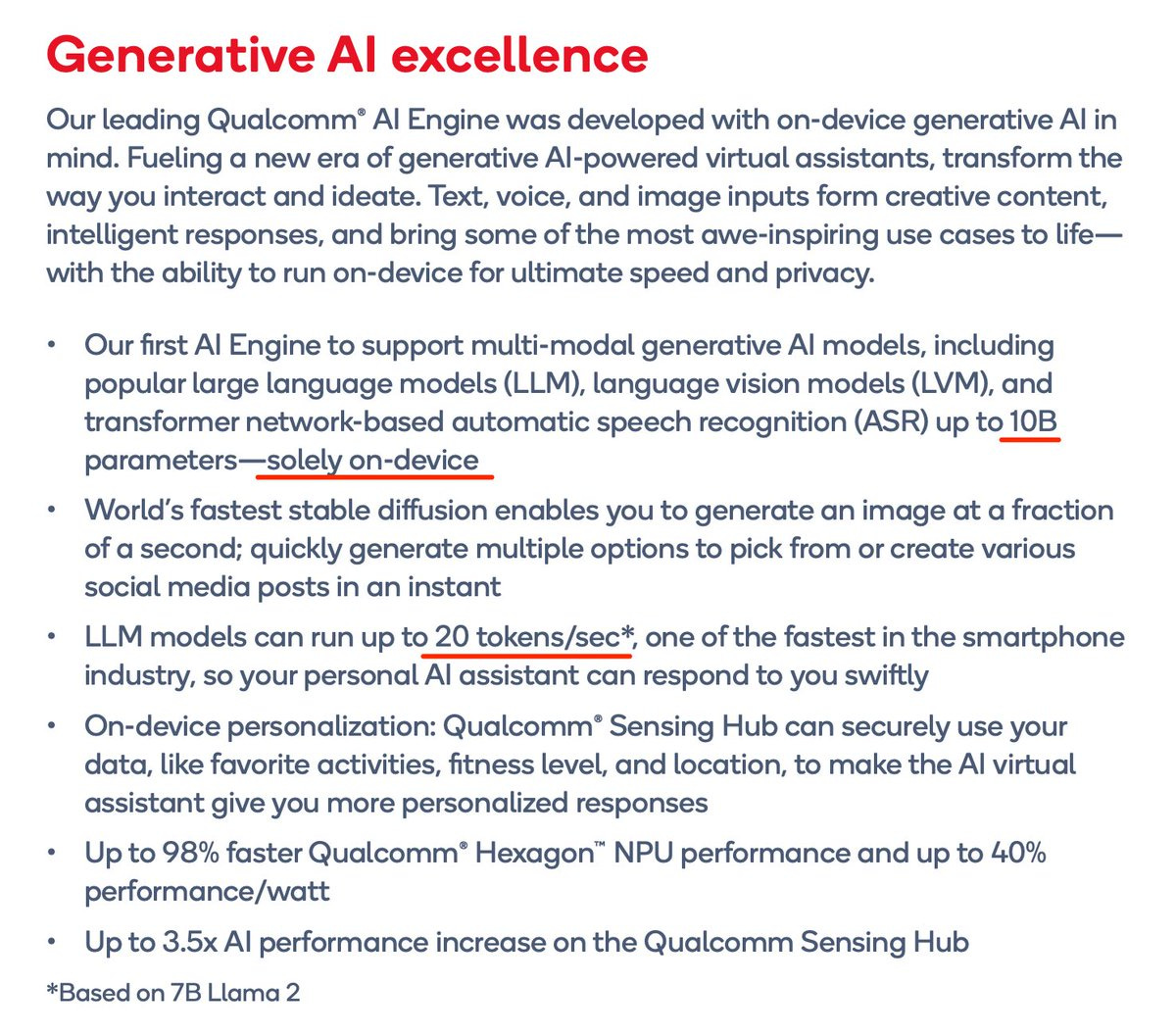

2/ LLM on your phone - An attentive viewer on Twitter noticed that Qualcomm has created a local AI model that works on your device. That means features that currently exist in the cloud will happen faster and more securely on your local phone.

This raises an interesting question - will we all have slightly different AI models in the future, tuned to our own behaviors? Signs point to yes.

3/ What does it mean to create an AI feature? - Benedict Evans discusses how to unbundle AI and the challenge of asking a pattern-matching system to build a consistent feature. Context is everything: the same prompt won’t necessarily deliver the same response when two different people run it. How do you get a consistent output from AI? It’s going to take some significant context setting.

What to do next

Hit reply if you’ve got links to share, data stories, or want to say hello.

Want to book a discovery call to talk about how we can work together?

The next big thing always starts out being dismissed as a “toy.” - Chris Dixon